2024 - Present

Automated Pediatric Lung Sound Analysis

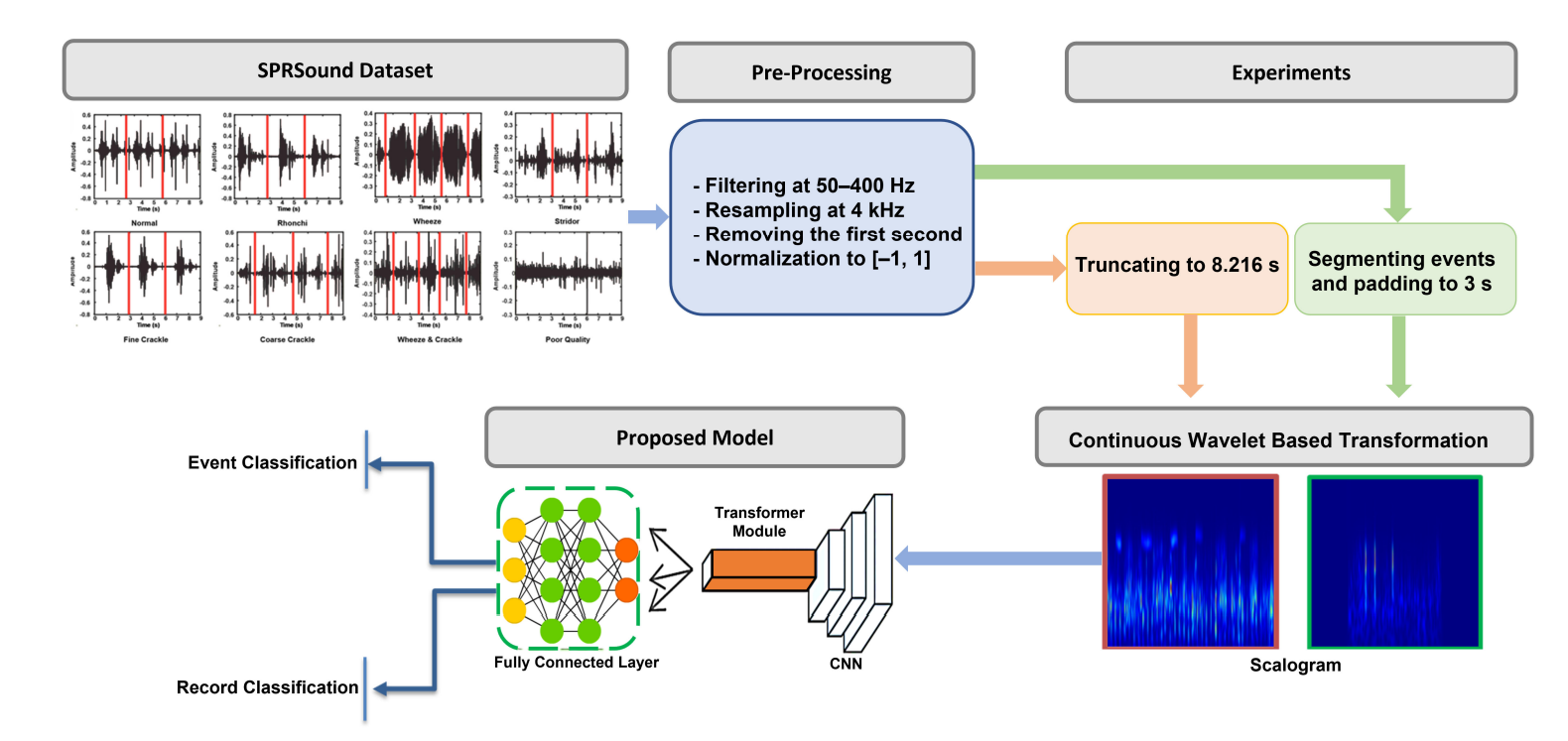

A stethoscope is one of the most common tools in medicine, but using one to diagnose a child requires years of specialized training. To make this expertise more accessible, we developed an AI system that automatically detects signs of respiratory distress in pediatric patients. The method converts lung sound recordings into visual representations and then uses a neural network to scan them for patterns associated with disease. Earlier approaches often missed subtle cues. Our model instead takes a "global-local" perspective: it combines a view of the whole recording with a closer look at short segments, so that brief or faint abnormalities are less likely to be overlooked.
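This summary does not say exactly which visual representation the system uses. One common choice for audio is a log-magnitude spectrogram, which shows how the energy at each frequency changes over time. The sketch below computes one with NumPy. The function name `log_spectrogram`, the 25 ms frame / 10 ms hop framing, and the 4 kHz sample rate in the usage example are illustrative assumptions and do not describe the project's actual pipeline.

```python
import numpy as np

def log_spectrogram(signal, sample_rate, frame_ms=25.0, hop_ms=10.0):
    """Hypothetical helper: turn a 1-D audio signal into a log-magnitude
    spectrogram with shape (frequency_bins, time_frames)."""
    frame_len = int(sample_rate * frame_ms / 1000)  # samples per analysis frame
    hop_len = int(sample_rate * hop_ms / 1000)      # samples between frame starts
    if len(signal) < frame_len:
        raise ValueError("signal is shorter than one frame")
    n_frames = 1 + (len(signal) - frame_len) // hop_len
    window = np.hanning(frame_len)  # taper each frame to reduce spectral leakage
    # Slice the signal into overlapping, windowed frames: (n_frames, frame_len)
    frames = np.stack([
        signal[i * hop_len : i * hop_len + frame_len] * window
        for i in range(n_frames)
    ])
    # Magnitude of the real FFT of each frame: (n_frames, frame_len // 2 + 1)
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    # Log-compress (small offset avoids log(0)); transpose so rows are frequencies
    return np.log(magnitude + 1e-10).T

# Usage: a synthetic 2-second, 200 Hz tone sampled at an assumed 4 kHz
sr = 4000
t = np.arange(2 * sr) / sr
spec = log_spectrogram(np.sin(2 * np.pi * 200 * t), sr)
print(spec.shape)  # (51, 198): 51 frequency bins x 198 time frames
```

With 100-sample frames at 4 kHz, each frequency bin spans 40 Hz, so the 200 Hz tone shows up as a bright horizontal band in bin 5. A network then treats an array like `spec` as a single-channel image.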

In our experiments, this approach was more accurate than previous state-of-the-art systems. The tool is designed to support doctors and nurses with a fast, data-driven way to screen for pneumonia and other lung infections. Our goal is to integrate this technology into digital stethoscopes to help save lives in regions where pediatric specialists are not always available.